AI-Powered Document Intelligence for Research Operations

Combining deep AI system experimentation with production healthcare research to build automated classification, metadata extraction, and a queryable research repository—preserving researcher attention for interpretation, judgment, and synthesis.

The Challenge

What happens when you combine deep AI system experimentation with production healthcare research operations? UX research operates at human speed in a machine-scale world. The volume of documents, transcripts, and artifacts generated exceeds any individual researcher’s ability to maintain coherent context.

The core question that shaped everything: How do you apply AI to research operations in ways that amplify human judgment rather than replace it?

Approach: The Two-Track Model

A parallel-track model emerged: personal laboratory experimentation alongside production deployment at Montefiore. Neither track would have been as powerful in isolation.

Track One: AILab (Personal)

Infrastructure for experimentation. Learning AI architecture through hands-on building. No organizational risk. Rapid iteration and failure. Deep technical understanding developed.

Track Two: Production

Organizational deployment of proven patterns. Applying architectural principles in real context. Managed risk with real stakes. Disciplined implementation. Immediate practical impact.

Skills and understanding transferred bidirectionally. Debugging ChromaDB distance calculations on a Raspberry Pi informed how to approach Azure OpenAI token limits in production. Understanding how clinicians actually worked with research insights shaped how the laboratory systems were architected.

Core Architecture Principles

Source documents remain immutable. Derived intelligence is explicitly disposable. Separate what must never change from what can always be rebuilt.

This separation proved transformative. AI systems could be aggressive in processing, experimental in approaches, continuously improving—because mistakes never compromised the underlying truth. When better models became available, the entire intelligence layer could be regenerated.
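A minimal sketch of this separation, assuming an in-memory store and a pluggable analyzer function. The names `SourceStore`, `SourceDocument`, and `rebuild_derived` are hypothetical and illustrate the principle only; they are not the system described here.

```python
import hashlib
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass(frozen=True)
class SourceDocument:
    """An immutable source record, identified by a hash of its content."""
    doc_id: str
    content: str


class SourceStore:
    """Append-only store: documents can be added and read, never changed."""

    def __init__(self) -> None:
        self._docs: Dict[str, SourceDocument] = {}

    def add(self, content: str) -> SourceDocument:
        doc_id = hashlib.sha256(content.encode("utf-8")).hexdigest()[:12]
        # Re-adding identical content is a no-op; nothing is overwritten.
        return self._docs.setdefault(doc_id, SourceDocument(doc_id, content))

    def all(self) -> List[SourceDocument]:
        return list(self._docs.values())


def rebuild_derived(
    store: SourceStore, analyze: Callable[[str], dict]
) -> Dict[str, dict]:
    """Regenerate the entire derived layer from the sources.

    The result is disposable: discard it and call this again with a
    better analyzer whenever one becomes available.
    """
    return {doc.doc_id: analyze(doc.content) for doc in store.all()}
```

Swapping in a better analyzer only means calling `rebuild_derived` again. The store is read during a rebuild and never written to, so a faulty analyzer can damage only the layer that was going to be thrown away anyway.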

System Capabilities Built

- Classification: Automatic document type identification with 95%+ accuracy across research artifacts, design documents, transcripts, and administrative files

- Metadata Extraction: Structured data capture from unstructured sources—dates, participants, topics, methods

- Indexing: Semantic clustering for longitudinal analysis, connecting insights across time and projects
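To make the first two capabilities concrete, here is a rough sketch of what a structured record might look like. The models and prompts behind the real classifier are not described here, so a keyword rule stands in for it; the `KEYWORDS` table, the `DocumentRecord` fields, and the ISO date pattern are all illustrative assumptions.

```python
import re
from dataclasses import dataclass, field
from typing import List

# Hypothetical keyword rules standing in for a real classifier.
KEYWORDS = {
    "transcript": ("interviewer:", "participant:"),
    "design_document": ("wireframe", "prototype", "mockup"),
    "administrative": ("invoice", "agenda", "budget"),
}

# Assumption: dates appear in ISO form (YYYY-MM-DD) in the source text.
ISO_DATE = re.compile(r"\b\d{4}-\d{2}-\d{2}\b")


@dataclass
class DocumentRecord:
    """Structured metadata derived from one unstructured document."""
    doc_type: str
    dates: List[str] = field(default_factory=list)


def classify(text: str) -> str:
    """Return the first document type whose keywords appear in the text."""
    lowered = text.lower()
    for doc_type, words in KEYWORDS.items():
        if any(word in lowered for word in words):
            return doc_type
    return "unclassified"  # nothing matched; leave it for a person to label


def extract_record(text: str) -> DocumentRecord:
    """Build a record holding the document type and any ISO dates found."""
    return DocumentRecord(doc_type=classify(text), dates=ISO_DATE.findall(text))
```

The point of the sketch is the shape of the output rather than the rule itself: every document yields the same small, typed record, which is what makes later indexing and cross-referencing possible.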

The Bridging Principle

Automate the Mechanical. Protect the Human.

Mechanical work is predictable, repetitive, and structurally consistent. It includes organizing information systematically, applying labels consistently, normalizing data formats, and cross-referencing related materials. These tasks matter, because research becomes unusable without them, but they don’t require judgment or ethical reasoning.

Human work involves navigating ambiguity without forcing premature closure. It requires ethical reasoning about how insights might be used or misused. It demands contextual interpretation that accounts for what isn’t being said, for organizational politics, for historical context that isn’t documented anywhere.

AI cannot replace human work. It should not even attempt to. But it can support humans by handling the mechanical burden that would otherwise consume their capacity.

Impact

A counterintuitive insight emerged: automation did not accelerate research by making individual studies faster. The time required to conduct a quality interview, observe a workflow, or facilitate a usability session remained unchanged.

Instead, automation accelerated research by making it steadier and more consistent. Researchers spent less time reconstructing context from memory or hunting through old folders. They started new work with better awareness of what was already known. The cognitive burden of maintaining institutional memory moved from individual human minds to a system that could hold it without fatigue.

Key Outcomes

- 60% reduction in time spent finding and reviewing related prior research

- 3× increase in frequency of connecting new findings to existing evidence

- 40% decrease in duplicative work—studies that unknowingly repeated prior research questions

Reflection

What Worked Well

- Two-track model: Personal lab provided freedom to fail; production provided reality testing. Neither alone would have been sufficient.

- Immutability principle: Separating source truth from derived intelligence enabled aggressive experimentation without risk.

- Starting with classification: Document organization was mundane but foundational—it enabled everything else.

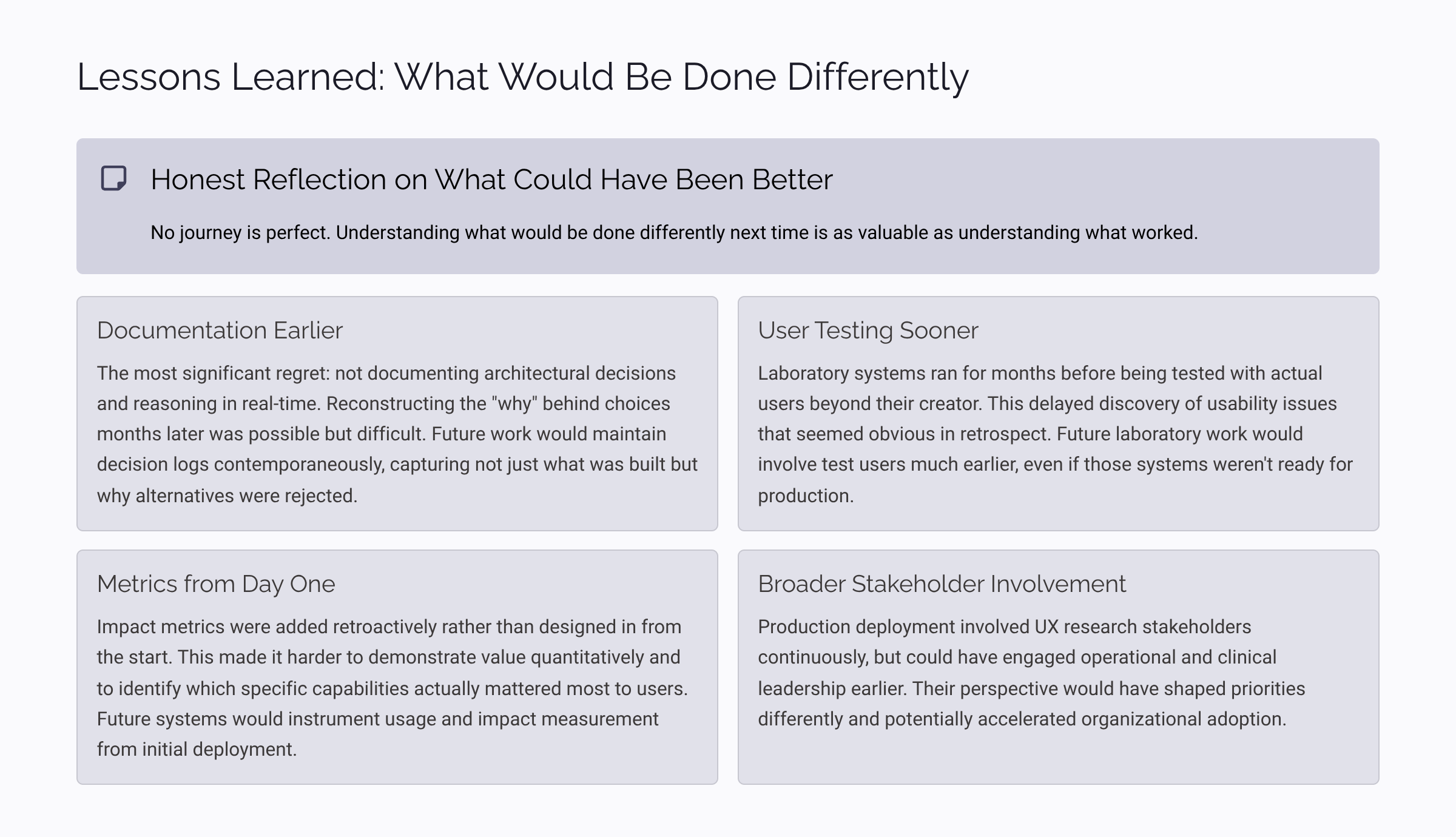

What I’d Do Differently

- Start production deployment earlier: Lab work was valuable, but some lessons only emerged under real constraints.

- Build retrieval before generation: The urge to generate summaries was strong, but retrieval infrastructure proved more valuable.

- Document architecture decisions as they happen: Reconstructing rationale later was harder than capturing it in the moment.
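The retrieval-first point can be illustrated with a small, dependency-free sketch. It ranks prior documents against a query using bag-of-words cosine similarity, which is a deliberate simplification standing in for the embedding-based search a vector store would provide. The function names and the `corpus` shape (document id mapped to text) are assumptions for illustration.

```python
import math
import re
from collections import Counter
from typing import Dict, List, Tuple


def tokenize(text: str) -> Counter:
    """Lowercase the text and count its alphanumeric tokens."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two token-count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0


def retrieve(
    query: str, corpus: Dict[str, str], top_k: int = 3
) -> List[Tuple[str, float]]:
    """Return up to top_k (doc_id, score) pairs, best match first.

    Documents sharing no tokens with the query are dropped rather than
    returned with a score of zero.
    """
    q = tokenize(query)
    scored = [(doc_id, cosine(q, tokenize(text))) for doc_id, text in corpus.items()]
    scored = [(doc_id, score) for doc_id, score in scored if score > 0]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)[:top_k]
```

Retrieval of this kind returns pointers to existing source documents, so a researcher can read the original evidence directly instead of relying on a generated summary of it.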

Principles for Others

- Separate what must never change from what can always be rebuilt

- Automate the mechanical to protect capacity for the human

- Start with organization—it’s boring but foundational

- Build retrieval infrastructure before generation capabilities

- Maintain human override at every decision point
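The last principle can be sketched as a data structure in which an automated suggestion never becomes final on its own authority. The `Decision` class, its field names, and the 0.8 review threshold below are hypothetical and serve only to show one possible shape.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Decision:
    """An automated suggestion that a person can always override."""
    doc_id: str
    suggested_label: str
    confidence: float
    human_label: Optional[str] = None

    @property
    def final_label(self) -> str:
        # A human-entered label always wins over the automated suggestion.
        if self.human_label is not None:
            return self.human_label
        return self.suggested_label

    def needs_review(self, threshold: float = 0.8) -> bool:
        # Assumption: low-confidence suggestions are queued for a person.
        return self.human_label is None and self.confidence < threshold
```

Keeping the suggestion and the human label in separate fields also preserves a record of where the automation and the researcher disagreed.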